TLDR

- Schwartz said a rare outcome can occur even when its odds were correctly estimated as very low.

- He linked the point to a past data center outage that needed improvised recovery steps.

- During the manual restoration, the old systems depended on DNS, TFTP, routers, and the order in which machines rebooted.

- After three full restart loops, the services came back and the network ran as expected again.

- The online thread drew attention to risk judgments, aging infrastructure, and uncertain recovery planning.

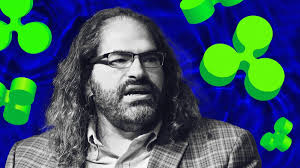

David “JoelKatz” Schwartz discussed risk, rare events, and old systems in a recent online thread. His comments linked a probability debate with a past data center recovery story.

The discussion matters to crypto readers because networks depend on careful risk calls. Old systems, delayed restarts, and weak recovery plans can create hard choices.

Schwartz Says Rare Events Can Still Fit Low Odds

Schwartz responded to a post about selling an asset for $1.05. The post said the asset later reached $2,368, even though the odds of such a rise had seemed low.

He wrote, “I’m still not sure the odds of that happening really were more than 1% at the time.” In other words, he stood by the low estimate rather than concluding it had been wrong.

Schwartz then explained a key point about probability: a rare event can happen without proving that the original estimate was wrong.

He said that one event alone cannot settle the question. More cases are needed before judging whether the estimate was right.
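Schwartz's point can be put in numbers. A 1% estimate is judged over many similar calls, not one: across many independent 1% events, roughly one in a hundred should occur, and at least one will very likely occur somewhere. The minimal Python sketch below takes the 1% figure from his comment; the trial counts and the random seed are arbitrary choices for illustration only.

```python
import random


def chance_of_at_least_one(p: float, n: int) -> float:
    """Probability that an event with chance p occurs at least once in n independent tries."""
    return 1 - (1 - p) ** n


def observed_rate(p: float, n: int, seed: int = 42) -> float:
    """Simulate n independent events that each occur with probability p; return the hit rate."""
    rng = random.Random(seed)  # fixed seed so the illustration is repeatable
    hits = sum(rng.random() < p for _ in range(n))
    return hits / n


# Across 100 independent 1% calls, at least one rare outcome is more likely than not.
print(round(chance_of_at_least_one(0.01, 100), 3))  # 0.634
# Over many calls, a well-calibrated 1% estimate should come true about 1% of the time.
print(observed_rate(0.01, 10_000))
```

Seen this way, a single asset that went from $1.05 to $2,368 is one draw from such a process, and on its own it says little about whether the 1% figure was well calibrated.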

Old Data Center Story Adds Context

The thread then moved to a story about old computer systems. Another post described a system that needed a patch, which in turn required a reboot.

The post said the machine had not been restarted since 2019. It also said, “nobody is sure it will come back up.”

Schwartz said the story reminded him of an ISP where he once worked. Its data center lost all power after someone hit an emergency power shutoff.

The team had no clear recovery plan at the time, so staff brought machines back online one by one in the order they assumed was right.

They started with lower-level systems and moved toward higher-level services. However, many services needed other services to be running before they could start.
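Working out a start order from "which service needs which" is what a topological sort does: it lists every service after the services it depends on. The minimal Python sketch below uses the standard library's `graphlib` (Python 3.9+). The dependency map is hypothetical, loosely modeled on the DNS, TFTP, and router links Schwartz described; it is not the ISP's real layout.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical dependency map: each service lists the services it needs running first.
DEPS = {
    "dns": set(),              # assumption: DNS needs nothing else to start
    "tftp": {"dns"},           # assumption: TFTP servers may not start without DNS
    "routers": {"tftp"},       # routers load their firmware over TFTP
    "mail": {"dns", "routers"},
    "web": {"dns", "routers"},
}


def start_order(deps):
    """Return a start order in which every service follows its dependencies, or None on a cycle."""
    try:
        return list(TopologicalSorter(deps).static_order())
    except CycleError:
        return None  # circular dependencies: no single-pass order exists


print(start_order(DEPS))
```

If the map contains a cycle (for example, DNS needing a router that itself needs DNS), `start_order` returns `None`, which is one situation in which no single ordered pass can bring everything up.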

Reboot Cycles Solved What Planning Missed

Schwartz said some machines had to start without DNS working, which meant their startup scripts might fail.

He also described routers that loaded their firmware over TFTP, while some of the TFTP servers might not start if DNS was down.

Because of these dependencies, the team used repeated restart cycles. They rebooted machines in a loop, hoping each pass fixed more missing parts.

Schwartz said the method worked after three full loops. Fewer things broke after each cycle, and the systems returned to normal.
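The restart loop can be modeled as repeating boot passes until nothing is left down. The Python sketch below is a simplification under stated assumptions: the boot order and dependency map are hypothetical, a machine comes up only if everything it needs is already up at that moment, and a machine that is up stays up and is skipped on later passes (the real team rebooted more broadly). Any pass that brings up at least one new machine gives the next pass more to work with, which matches the observation that fewer things broke each cycle.

```python
from typing import Dict, List, Optional, Set

# Hypothetical dependencies: each machine lists what must be up before it starts cleanly.
DEPS: Dict[str, Set[str]] = {
    "dns": set(),
    "tftp": {"dns"},
    "routers": {"tftp"},
    "mail": {"dns", "routers"},
    "web": {"dns", "routers"},
}

# Hypothetical "lowest level first" guess that puts routers before the services they need.
ASSUMED_ORDER: List[str] = ["routers", "tftp", "dns", "mail", "web"]


def passes_until_up(order: List[str], deps: Dict[str, Set[str]], max_passes: int = 10) -> Optional[int]:
    """Count boot passes until every machine is up; None if a pass makes no progress."""
    up: Set[str] = set()
    for n in range(1, max_passes + 1):
        progressed = False
        for machine in order:
            if machine in up:
                continue  # simplification: a healthy machine is left running
            if deps[machine] <= up:  # every dependency is already up
                up.add(machine)
                progressed = True
        if len(up) == len(order):
            return n
        if not progressed:
            return None  # stuck: a dependency cycle or a machine that never appears
    return None


print(passes_until_up(ASSUMED_ORDER, DEPS))  # 3 for this made-up graph
```

Fed a dependency-respecting order such as `["dns", "tftp", "routers", "mail", "web"]`, the same function returns 1. The count of 3 for the assumed order is a property of this invented graph only; the thread does not describe the ISP's actual dependencies.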

He added that the team never drew up a better recovery plan afterward. The account showed how real systems can recover through trial and error, a rough start order, and repeated restarts.